Artificial Intelligence (AI) and Big Data have generated a lot of buzz lately, and together they have changed the face of analysis. These technologies will continue to lead the way in making predictions, because at their heart is data-driven analysis. Acquiring and analyzing data is easier and more accessible than ever, which allows organizations of all sizes, from large enterprises to start-ups, to make the most of these technologies.

AI and Big Data are two separate disciplines, yet in practice neither delivers much on its own. They have an interdependent relationship: AI needs data to learn from, and big data needs AI to be turned into insight.

This article explores that connection. We will first look at each separately, i.e., what artificial intelligence is and what big data is, and then move on to the role of artificial intelligence in big data and vice versa. Next, we will closely examine the relationship between big data and AI, how AI benefits big data, how AI improves insight into data, how the two benefit businesses, and some real-world examples.

Let’s begin by explaining Big data and artificial intelligence.

Big Data and AI: Concept Overview

What is Big Data?

As the word ‘big’ suggests, big data refers to amounts of data so vast that traditional data methodologies cannot handle or manage them. Big data is collected from a variety of sources and is complex. The need for it arises from several factors, and it has become increasingly crucial across industries.

The following five characteristics define it and are also known as “the five V’s of big data”:

Volume

Volume refers to the size and amount of data. It helps to answer the question: how much data do we have? Big data is an enormous volume of data. According to Statista, global data creation is forecast to grow to more than 180 zettabytes by 2025. Data is growing at lightning speed due to the Internet of Things (IoT), cloud computing traffic, social media, and mobile traffic.

Variety

Variety refers to the various formats in which data is captured: structured, semi-structured, and unstructured. Together, these make the data heterogeneous.

- Structured data: Structured data is organized data in which the data has a defined length and format. This data can be tabulated in a relational database.

- Semi-Structured data: Semi-structured data is semi-organized, meaning it does not fit the usual rows-and-columns layout yet still has a structure. For instance, log files are semi-structured data.

- Unstructured data: Unstructured data has no defined form and cannot be tabulated in a relational database or a traditional format of rows and columns. Data such as text, audio, images, and videos are unstructured.
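To make the three categories concrete, the short sketch below holds one example of each form using only Python's standard library. The field names and sample values are invented for illustration and do not come from any real dataset.

```python
import csv
import io
import json

# Structured: fixed columns that fit a relational table (hypothetical rows).
structured_csv = "customer_id,amount\n101,25.50\n102,40.00\n"
rows = list(csv.DictReader(io.StringIO(structured_csv)))

# Semi-structured: a JSON log line. It has keys, but fields can vary per record.
log_line = '{"event": "login", "user": 101, "meta": {"device": "mobile"}}'
record = json.loads(log_line)

# Unstructured: free text with no predefined fields.
review = "The delivery was late but the support team was very helpful."

print(rows[0]["amount"])         # structured data is accessed by column name
print(record["meta"]["device"])  # semi-structured data is accessed by nested key
print(len(review.split()))       # unstructured text needs processing before analysis
```

The structured and semi-structured values can be queried directly, while the free text only becomes analyzable after further processing such as tokenization.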

Velocity

Velocity is the speed or rate at which the data is received, processed, stored, and made accessible. Examples include the number of phone calls a customer care representative receives in an hour, the number of Twitter posts published in a given period, and the rate at which users interact or engage with an ad.

Veracity

Veracity refers to how accurate the data is. It concerns the integrity and quality of the data: is the data complete, or is something missing? Attention to veracity helps avoid the “garbage in, garbage out” problem, where poor-quality input leads to poor-quality results.

Value

Value refers to how useful the collected data is. Value helps to understand if the data is good enough for a company to draw insights and make effective decisions.

Other V’s are as follows:

- Variability: Variability captures inconsistency in the data flow, that is, whether the nature of the data is changing. If the meaning of the data changes frequently, the data has high variability.

- Visualization: Visualization is essential to depict the findings and results graphically.

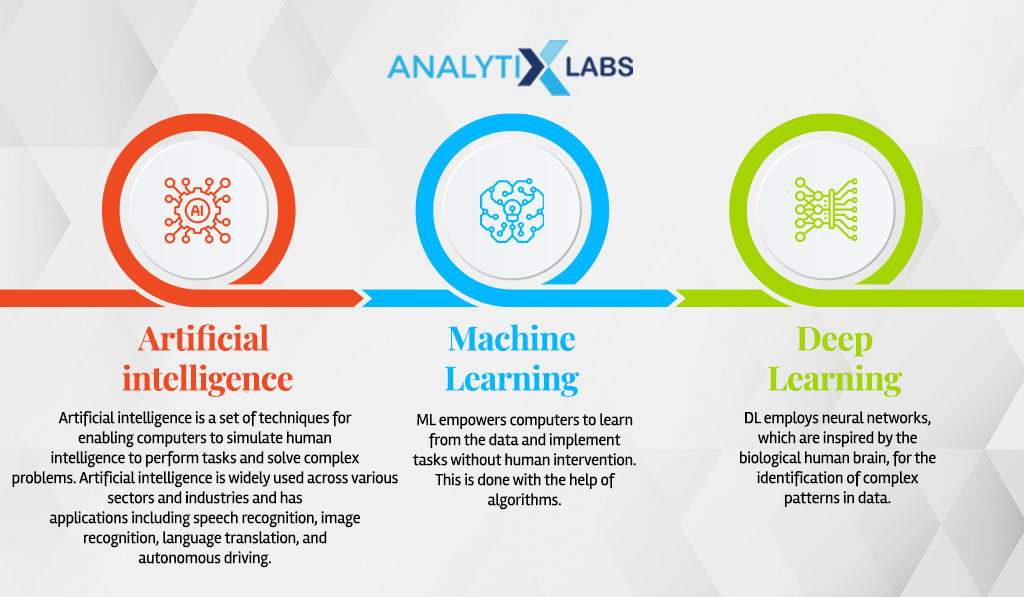

Artificial Intelligence

Artificial intelligence is a set of techniques that enable computers to simulate human intelligence to perform tasks and solve complex problems. It is widely used across sectors and industries. Its applications include speech recognition, image recognition, language translation, and autonomous driving.

Two key subsets of Artificial Intelligence are Machine Learning and Deep Learning, the latter being itself a subset of machine learning.

- Machine Learning: ML empowers computers to learn from data and perform tasks without being explicitly programmed for each one. This is done with the help of algorithms.

- Deep Learning: DL employs neural networks inspired by the biological human brain to identify complex patterns in data.

Role of Artificial Intelligence in Big Data

Artificial intelligence is a separate subject from big data; it is not a segment of big data, yet it operates on data. Data is the fuel for artificial intelligence, a technology designed to mimic human intelligence.

The role of artificial intelligence in big data is that artificial intelligence facilitates stages of the big data workflow. The steps include aggregating, storing, and retrieving varied data types from disparate sources.

Artificial intelligence in big data can accomplish the following tasks:

- Determine data types of the variables or fields

- Data cleaning or preprocessing

- Data exploration

- Data visualization

- Find relationships between attributes and datasets

- Feature selection

- Feature engineering

- Identify patterns in the data

Artificial intelligence plays a crucial role in detecting trends or patterns in the data, thanks to its ability to learn from the data. This helps in use cases such as analyzing customer feedback.

AI can recognize the outliers present in the data. This helps to identify the significant parts of customer feedback or a survey, leave out the parts that are not required, and make adjustments as needed.

Artificial intelligence is also what makes big data significant. Without artificial intelligence, such a huge amount of data would be of little value; its worth comes from using the data and deriving insights from it by molding it into intelligence.

Data and artificial intelligence are closely intertwined and dependent on each other. AI and machine learning help address common data issues, including data quality and value. High-quality data is crucial because data loses its importance and meaning when its quality is low.

Understanding data requires considering the context of a business problem to determine its relevance. Artificial intelligence, along with its subsets, helps ensure data quality.

Data scientists are often said to spend about 80% of their time cleaning and preparing data. Artificial intelligence therefore acts as a litmus test for the data: data that does not pass the checks set by artificial intelligence methodologies is not worth using. Machine learning approaches for data checks include:

- Check the data types of columns

- Check for spaces in column names

- Detect missing or null values

- Check for duplicate entries

- Determine outlier values

- Transforming variables

- Scaling and Normalization of data

Machine learning tools also treat null values and remove duplicate records and outliers. They correct the data types, remove trailing spaces from column names, and preprocess the data so it can be ingested into the algorithms for creating the models.
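A few of the checks listed above can be sketched in plain Python. This is a simple rule-based illustration rather than a machine learning method, and the records, column names, and the `clean` helper are hypothetical.

```python
# Hypothetical raw records: note the trailing space in "age ", a missing value,
# a duplicate row, and numbers stored as strings.
raw = [
    {"name": "Asha", "age ": "34"},
    {"name": "Ben", "age ": ""},
    {"name": "Asha", "age ": "34"},
    {"name": "Chen", "age ": "29"},
]

def clean(records):
    """Strip column names, fix types, mark missing values, drop duplicates."""
    cleaned, seen = [], set()
    for rec in records:
        # Remove stray spaces from column names.
        rec = {key.strip(): value for key, value in rec.items()}
        # Treat empty strings as missing; otherwise correct the data type.
        rec["age"] = int(rec["age"]) if rec["age"] else None
        # Skip records that exactly repeat an earlier one.
        fingerprint = tuple(sorted(rec.items(), key=lambda kv: kv[0]))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        cleaned.append(rec)
    return cleaned

records = clean(raw)
missing = [r["name"] for r in records if r["age"] is None]
print(records)
print("rows with missing age:", missing)
```

In practice, a data-frame library would typically handle these steps, but the logic of each check is the same.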

Role of Big Data in Artificial Intelligence

Artificial Intelligence thrives on data. It requires data to keep developing its capabilities, and that is how big data plays its role in artificial intelligence. Big data helps AI produce better results more efficiently.

Without data, AI technology cannot do much; it remains just a technique. This matters more as more data becomes available and more AI systems gain access to it. The more data machines have to learn from, and the more iterations they run over it, the better the accuracy and efficiency they can achieve without human intervention.

The analysis of large datasets has given rise to many and varied practical use cases for artificial intelligence, which in turn opened up opportunities for machine learning and deep learning.

Organizations of all sizes, from startups to established enterprises, get data from many kinds of sources. They need ways to store, manage, and process it, and they use emerging big data techniques for this purpose.

As we saw with the five V’s of big data, data that is large, varied, timely, accurate, and useful enables machine learning and deep learning models to be more precise. This is how big data plays its role in AI.

How are AI and Big Data related?

Artificial intelligence and big data have a synergistic relationship. Artificial intelligence needs large volumes of data to train models well, and more data helps it make more accurate predictions. Big data analytics, in turn, ropes in AI tools for better data analysis.

Data is the common theme around artificial intelligence and big data, yet both have distinctive purposes. Let’s see what these components individually do:

Purpose of Big Data

Big data receives a huge amount of data from various sources. Big data plays the role of input receiver. Eventually, this data requires cleaning, munging, data processing, and normalization for it to be instrumental.

Purpose of Artificial Intelligence

Artificial Intelligence is the next step in this process. The data goes through a series of steps in which it is cleaned and prepared for the algorithms. The processed data is then used to build models.

These models are programs in which the machine takes the data as input and learns from it, much as humans learn from experience. The models are then evaluated, and their results are validated using established methodologies.

Data and artificial intelligence are inseparable partners, each relying on the other. While data traditionally provided factual information and statistics, its role has expanded significantly.

Artificial intelligence has breathed new life into data, opening up vast possibilities. AI empowers us in numerous ways, whether it’s suggesting the ideal car to buy, recommending the next movie to watch, or generating automated text.

Artificial intelligence relies on big data, which fuels its capabilities. AI enables us to work with structured numerical data and to process unstructured data such as text, images, audio, and video. The efficiency and accuracy of AI techniques improve as they analyze and process more data.

The relationship between AI and big data is mutually beneficial, driving technological advancements. AI leverages big data techniques, while big data fuels AI’s capabilities. Together, they enable businesses to make predictions and recommendations and identify future trends across various industries.

In the past, businesses relied on rule-based methods for reporting, which lacked adaptability. Machine learning methods, backed by statistical models and data analysis, enable models to recognize trends and patterns.

To harness the power of big data, businesses can leverage AI and apply machine and deep learning algorithms. This combination allows for predictions, recommendations, and optimization in diverse domains such as science, commerce, finance, media, entertainment, and technology.

How does AI benefit Big Data?

Artificial intelligence benefits big data in four core ways:

- Augmenting Data Analytics

One of the challenges that companies face is to manage big data effectively. SQL and similar languages are used for the extraction of data. Traditional analysis methods are inefficient and consume a lot of time and energy in deriving conclusions.

Artificial intelligence and machine learning are preferable tools for data analysis because they make analytics less labor-intensive. Combined with vast volumes of big data, these technologies support predictive and prescriptive analytics.

- Higher processing speed

Artificial intelligence allows us to analyze data faster, which is highly favorable for data processing. We can analyze and manage data manually, but we cannot beat the speed of these tools.

This speed enables organizations to make decisions, derive meaningful findings, and use their resources efficiently.

- Removal of data issues

There are various problems with data, such as collection, storage, management, and processing. The crucial challenge is data quality. The models are built on this data, so it is paramount to ensure that only relevant pieces of information are processed. Otherwise, we get garbage in, garbage out.

Machine learning helps with this problem by detecting and treating missing or null values and by checking for and removing outliers in the data. Machine learning techniques can also normalize, standardize, and transform the data, preparing it for model ingestion.
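The preparation steps named here can be illustrated with a small standard-library sketch. The readings are made up, and the outlier rule (three median absolute deviations from the median) is just one common choice assumed for this example.

```python
from statistics import mean, median, pstdev

# Hypothetical readings with one missing value and one extreme value.
values = [12.0, 15.0, None, 14.0, 13.0, 250.0, 16.0]

# 1. Drop missing values.
present = [v for v in values if v is not None]

# 2. Remove outliers using the median absolute deviation (MAD), which is
#    less distorted by extreme values than the mean and standard deviation.
med = median(present)
mad = median(abs(v - med) for v in present)
kept = [v for v in present if abs(v - med) <= 3 * mad]

# 3. Min-max normalization: rescale the values to the range [0, 1].
lo, hi = min(kept), max(kept)
normalized = [(v - lo) / (hi - lo) for v in kept]

# 4. Standardization: rescale to zero mean and unit standard deviation.
mu, sigma = mean(kept), pstdev(kept)
standardized = [(v - mu) / sigma for v in kept]

print(kept)
print(normalized)
```

Normalization suits algorithms that expect bounded inputs, while standardization is a common default when features are measured on very different scales.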

- Detection of Patterns

The hallmark of artificial intelligence is its ability to mimic human intelligence, learn from data, and execute tasks by itself. This allows models to recognize patterns in the data. In a text document, for example, they can find common themes or topics and recognize the sentiment of feedback or messages.

In image data, models can determine what object is present in an image, for example distinguishing a cat from a dog. All these are possible due to the competencies of artificial intelligence.
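For the text case, the idea of finding themes and sentiment can be shown with a deliberately simple word-list approach. This is a stand-in for illustration, not a learned model: the word lists and feedback sentences are hypothetical, and a real system would learn such associations from labeled data.

```python
from collections import Counter
import re

# Hypothetical word lists; a trained model would learn these from labeled data.
POSITIVE = {"great", "helpful", "fast", "love"}
NEGATIVE = {"late", "broken", "slow", "rude"}
STOPWORDS = {"the", "was", "but", "and", "is", "a", "i", "very", "it"}

feedback = [
    "Delivery was late and the box was broken",
    "Support was helpful and fast",
    "I love the app but delivery is slow",
]

def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())

def sentiment(text):
    """Label text by counting positive words minus negative words."""
    words = tokenize(text)
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return "positive" if score > 0 else "negative" if score < 0 else "neutral"

labels = [sentiment(text) for text in feedback]
# Count non-stopword terms across all feedback to surface recurring themes.
themes = Counter(w for text in feedback for w in tokenize(text) if w not in STOPWORDS)

print(labels)
print(themes.most_common(1))
```

Here the most frequent term across messages hints at a recurring theme, while the per-message labels summarize sentiment.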

How does AI improve insight into data?

Data is growing at a flabbergasting speed; it is projected to reach 180 zettabytes by 2025. With this much data, artificial intelligence and its methodologies are needed to make sense of it.

Machine learning and deep learning tools are employed for this, and they rely on big data because they learn from data. Models built with machine learning and deep learning algorithms iterate over time, evolving toward the optimum solution.

The results of these learned models offer insights and value for making meaningful business decisions.

Initially, looking at data, we could only state the current status of the data, which would reflect how the data looks today. In other words, it would indicate: “This is what has occurred.”

With AI and machine learning, one can predict, based on the big data, “what will happen in the future” and even prescribe solutions for obtaining sustainable and optimum results. The models can also be designed to perform specific tasks or actions based on prescriptions and predictions.
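The shift from describing the past to predicting the future can be sketched with a straight-line trend fitted by ordinary least squares. The monthly sales figures below are invented, and a linear trend is only an assumption made for this example.

```python
# Hypothetical monthly sales figures (units sold in months 1 to 6).
months = [1, 2, 3, 4, 5, 6]
sales = [100, 110, 120, 130, 140, 150]

# Descriptive: "this is what has occurred".
average_sales = sum(sales) / len(sales)

# Predictive: fit a straight line by ordinary least squares, then extrapolate.
n = len(months)
mean_x = sum(months) / n
mean_y = sum(sales) / n
slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(months, sales)) / sum(
    (x - mean_x) ** 2 for x in months
)
intercept = mean_y - slope * mean_x

def predict(month):
    """Estimate sales for a given month from the fitted trend line."""
    return intercept + slope * month

print(average_sales)  # summary of the past
print(predict(7))     # estimate for the next month
```

A prescriptive step would go further and use such a prediction to recommend an action, for example how much stock to order.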

AI is also improving insight into data by providing new ways to analyze it. Earlier, deriving insights was labor-intensive: developers had to query the data with Structured Query Language (SQL), and analysts had to build statistical models themselves.

Now, statistical models are powered by automation and computing technologies. This has transformed data analysis into a less laborious and less time-consuming task. Analysis that could once take weeks can now take only a day or two.

How AI and Big Data benefit businesses

Businesses across different sectors and industries are benefiting from artificial intelligence and big data in the following ways:

- Bird’s-eye view of the business

Companies are becoming smarter in their approach, adopting analytical tools to get a complete view of their businesses and customers. Earlier, companies stored data statically, so generating and updating reports manually took longer. Improved big data and AI technology has made this routine easier.

Companies utilize automated and distributed analytical methodologies and store the data in data lakes and marts. These integrated solutions collect data from diverse sources. This allows them to generate reports highlighting KPIs and give a better understanding of business and users.

- Improved price prediction and optimization

Businesses used to forecast sales based on the previous year’s sales. However, because of factors such as changing trends, COVID, and other miscellaneous variables, price estimation and optimization using conventional methods is challenging.

Big data helps businesses identify trends early and estimate, with greater confidence, how these trends will affect future prices. Retail businesses employing big data and artificial intelligence reportedly see improved seasonal predictions, with errors reduced by almost 50 percent.

- Consumer behavior analysis

Along with quantitative analysis, big data and AI also offer qualitative analysis. They help answer questions about user needs, customer retention rate, product and service usage, and purchase frequency.

These techniques help businesses understand customers’ behavioral patterns and provide key insights. Businesses use them to identify trends, acquire new customers, improve conversion and retention rates, and improve their products and customer satisfaction. Using AI and big data, businesses can automate customer segmentation as well.
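Automated customer segmentation is often approached with clustering. The sketch below is a minimal k-means implementation in plain Python, assuming two hypothetical features per customer and fixed starting centroids so the result is repeatable; it is an illustration, not a production method.

```python
# Hypothetical customers: (purchases per month, average order value).
customers = {
    "c1": (1, 20.0), "c2": (2, 25.0), "c3": (1, 22.0),
    "c4": (9, 80.0), "c5": (10, 85.0), "c6": (8, 78.0),
}

def distance_sq(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def centroid(points):
    """Mean of a list of points, computed per dimension."""
    return tuple(sum(dim) / len(points) for dim in zip(*points))

def nearest(point, centroids):
    """Index of the centroid closest to the given point."""
    return min(range(len(centroids)), key=lambda i: distance_sq(point, centroids[i]))

def k_means(points, centroids, iterations=10):
    """Alternate between assigning points and recomputing centroids."""
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            clusters[nearest(p, centroids)].append(p)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [centroid(c) if c else old for c, old in zip(clusters, centroids)]
    return centroids

points = list(customers.values())
# Deterministic start: use the first and last customer as initial centroids.
centroids = k_means(points, [points[0], points[-1]])
segments = {name: nearest(p, centroids) for name, p in customers.items()}
print(segments)
```

With real data, the features would normally be scaled first so that no single measure, such as order value, dominates the distance calculation.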

- Risk management

A business must prepare for the future and its longevity. AI data-driven models and big data technologies enable businesses to recognize and mitigate risks, including customer and market risks. Using the tools, companies can also prepare themselves for obstacles arising from predicted events such as natural calamities.

Big data, as an extensive collection of data from diverse sources, gives visibility into plausible risks. It helps quantify exposure to risks, losses, and change. Organizations combine information from many sources to gain more awareness of situations and a better understanding of how to allocate resources to manage and handle emerging threats.

- Anomaly detection

Big data-powered analytics empowers businesses to detect unusual occurrences or discrepancies in their systems by analyzing system behavior. Big data platforms store large volumes of data of all kinds, including logs, transactional data, Excel and CSV files, and data captured via sensors and devices.

Coupling this data with AI gives us approaches for identifying, detecting, preventing, and mitigating possible fraudulent activities. For instance, sensors pick up minute details that help define benchmarks and ranges, so that any reading beyond the range can be flagged as an anomaly.
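The benchmark-and-range idea can be sketched as follows. The temperature readings are hypothetical, and the range of the mean plus or minus three standard deviations is an assumed rule of thumb, not a universal threshold.

```python
from statistics import mean, stdev

# Hypothetical baseline temperature readings from normal operation.
baseline = [70.1, 69.8, 70.3, 70.0, 69.9, 70.2, 70.0, 69.7]

# Define the benchmark range as the mean +/- 3 sample standard deviations.
center, spread = mean(baseline), stdev(baseline)
lower, upper = center - 3 * spread, center + 3 * spread

def find_anomalies(readings):
    """Return (index, value) pairs for readings outside the benchmark range."""
    return [(i, v) for i, v in enumerate(readings) if not lower <= v <= upper]

new_readings = [70.0, 70.2, 75.4, 69.9, 64.8]
print(find_anomalies(new_readings))
```

Learned anomaly detectors replace the fixed rule with a model of normal behavior, but the principle of flagging values that fall outside an expected range is the same.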

Examples of AI and Big Data

Use cases for big data and artificial intelligence are prominent across diverse sectors and industries. Let’s look at some of the applications:

Starbucks’s AI-embedded personalized email tool

Starbucks has been leveraging the power of personalized emails. It uses the potential of big data with natural language processing (NLP), a segment of AI, to send personalized emails.

Starbucks produces over 400,000 hyper-personalized emails every week, in different variants highlighting promotions and offers. This real-time personalization engine has improved the performance of its digital marketing campaigns compared with drafting a dozen emails every month.

Technified platforms

The ability of artificial intelligence systems to recognize voice and process natural language is making people’s daily lives far easier. Today, mobile platforms actively promote the simplicity of voice search to help users accomplish tasks more easily.

Tech powerhouses such as Google, Amazon, Netflix, and Microsoft have AI and big data as the pillars behind their services. It is hard to imagine our lives without Google Maps, which guides us to our destination in unfamiliar surroundings. When Google announced Immersive View for Maps, it added one more feather to its cap of AI innovations.

Smart speakers and voice assistants such as Google Home, Amazon Echo with Alexa, and Apple’s Siri can communicate with us and perform the tasks we ask for. Programmed to understand human speech, including its tone and modality, these machines adapt and carry out tasks. Behind the scenes, they employ artificial intelligence techniques to understand voice and generate answers.

Netflix applies machine learning algorithms to offer users personalized movie recommendations based on their viewing history and on movies preferred by other users with similar tastes and interests.
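Recommending titles liked by users with similar tastes is the idea behind user-based collaborative filtering. The sketch below illustrates that general technique with hypothetical users, titles, and ratings; it is not a description of Netflix's actual system.

```python
from math import sqrt

# Hypothetical ratings (1 to 5) by user and title.
ratings = {
    "ana":  {"Drama A": 5, "Comedy B": 1, "Thriller C": 4},
    "bo":   {"Drama A": 4, "Comedy B": 2, "Thriller C": 5, "Drama D": 5, "Comedy E": 2},
    "cruz": {"Drama A": 1, "Comedy B": 5, "Drama D": 2, "Comedy E": 5},
}

def cosine(u, v):
    """Cosine similarity over the titles both users have rated."""
    shared = set(u) & set(v)
    if not shared:
        return 0.0
    dot = sum(u[t] * v[t] for t in shared)
    norm_u = sqrt(sum(u[t] ** 2 for t in shared))
    norm_v = sqrt(sum(v[t] ** 2 for t in shared))
    return dot / (norm_u * norm_v)

def recommend(user):
    """Rank unseen titles by the similarity-weighted ratings of other users."""
    scores, weights = {}, {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their)
        for title, rating in their.items():
            if title not in ratings[user]:
                scores[title] = scores.get(title, 0.0) + sim * rating
                weights[title] = weights.get(title, 0.0) + sim
    ranked = {t: scores[t] / weights[t] for t in scores if weights[t] > 0}
    return sorted(ranked, key=ranked.get, reverse=True)

print(recommend("ana"))
```

Because the first user's ratings resemble the second user's far more than the third's, the second user's favorite unseen title is ranked first.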

HealthCare

Big data and AI have also improved the healthcare sector by enabling it to offer fast and cost-effective treatments. With the help of real-time data and analytics, hospitals have witnessed a decrease in patients’ length of stay.

Doctors also can quickly diagnose patients and save lives using intelligent innovations such as virtual diagnosis, telemedicine, and robotic surgeries. Additionally, doctors apply evidence-based medicine based on the data gathered from sensors and millions of cell phones.

Financial Firms and Market

Financial firms and banks have deployed chatbots as their primary point of contact for customer support and care. Predefined options and predictive text in messages support customer queries in these virtual text agents.

The Securities and Exchange Commission (SEC) applies natural language processing (NLP) methodologies and network analytics to help monitor illegal transactions in financial market activities. Trading data analytics is used for activities such as the following:

- high-frequency trading

- decision-based trading

- fraud red flags

- card fraud detection

- archival and analysis of audit trails

- risk analysis

- predictive analysis

- reporting enterprise credit

- customer data transformation

Natural Resources and Agriculture Sector

The combination of AI and big data enables fast analysis by applying predictive modeling techniques to huge volumes of geospatial, temporal, and graphical data, as well as to seismic interpretation and reservoir characterization. The analysis helps governments with environmental conservation.

AI and big data technology helps farmers count and monitor their produce in each phase, from growth to maturity. AI tools working with satellite systems or drones help detect weak spots in large tracts of land early, before a problem spreads to other areas. This has widened the monitoring abilities of agricultural organizations.

Conclusion

Artificial Intelligence and Big Data have a deep-rooted, inseparable relationship. They go hand in hand, and together they have disrupted how we and the world operate daily. Big data empowered with AI capabilities is shaping the future of sectors across industries and providing businesses with valuable insights to make informed decisions. These intelligent tools will continue to evolve and aid us in making sense of the raw collected information.

FAQs

- How does Big Data influence AI?

Big data influences AI by providing data at scale. AI iterates over this data to learn and improve its decision-making. The broader and more representative the data, the more of the key factors behind a decision the AI can take into account.

- Is Big Data a branch of AI?

Big Data is not a branch of AI. The two have a symbiotic relationship, i.e., each depends on the other. Big data is characterized by storing massive volumes of data and processing them quickly using analytics tools and AI.

- Are Big Data and AI the same?

Although big data and artificial intelligence are not the same, they are related. Big data provides AI with enormous volumes of data to analyze. Artificial Intelligence (AI), Machine Learning, and Deep Learning are among the analytical techniques that big data uses.

